The cloud.

The glorious cloud.

It lets us rack up large bullsh*t bills,

it makes sure that we are always in the wrong tier,

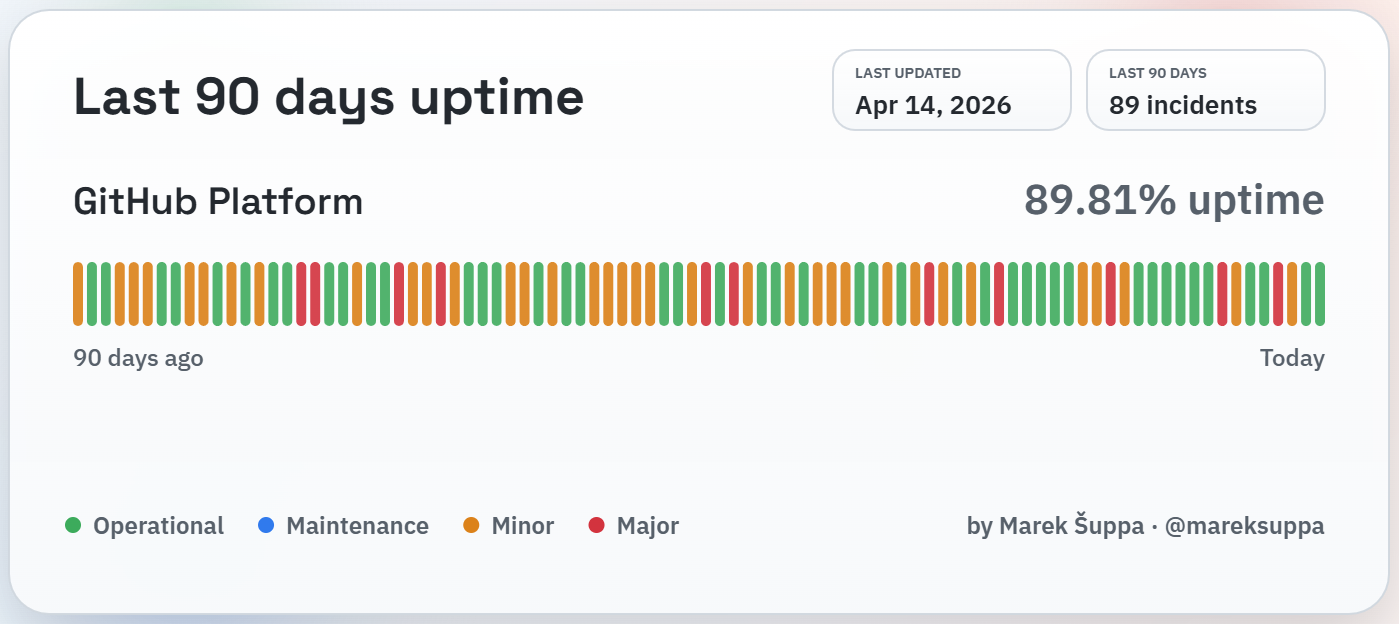

but most importantly, it ensures ~~99.999%~~ 89.81% uptime.

So the cloud sucks, obviously. But why do we use it?

There are people with SaaS products who pretend that they will need to scale automatically overnight to 1,000,000 active users (from their normal 10, because I’m sure you can 100,000x your userbase overnight). But these people are, to me, mythical creatures. I don’t interact with software devs in my real life (besides myself), but I still get the cloud forced down my throat, even in IT.

But why? The reasons I’ve been given are all made-up.

- “It will be easier to maintain” (Until literally anything goes wrong)

- “It’s the future” (Why do we have to band-wagon?)

- “It will be cheaper” (Literally how?)

On price

People who say “the cloud is cheaper” are basically always lying. The reason they think they aren’t lying is that they are buying enterprise server hardware. For the uninitiated: enterprise hardware costs about 4x as much as consumer hardware, for a marginal stability increase. Let’s run a quick example and pretend you are a small company, fewer than 500 employees, that needs a server to do DHCP, DNS, Active Directory, and perhaps some internal reporting.

ThinkSystem ST50 V3 Tower Server

- 8 Cores @ 2.7 GHz

- 16GB DDR5 ECC RAM @ 5600MHz

- 2TB 3.5” HDD, 6Gb/s

- Cost: $4310.80 (they “estimate” that the normal “market value” of this configuration is $10,777.02, for whatever that’s worth.)

What does this give you that a $500 PC can’t give you?

- IPMI

- ECC RAM
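To put a number on that gap, here is a quick sketch of the arithmetic using the two prices above. The function name `price_gap` is just something I made up for illustration.

```python
def price_gap(enterprise_price, consumer_price):
    """Return (absolute premium in dollars, price ratio) for two prices."""
    premium = enterprise_price - consumer_price
    ratio = enterprise_price / consumer_price
    return premium, ratio


# Prices taken from the example above.
premium, ratio = price_gap(4310.80, 500.00)
print(f"Premium: ${premium:,.2f}")  # Premium: $3,810.80
print(f"Ratio:   {ratio:.1f}x")     # Ratio:   8.6x
```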

Before you can answer the question “are IPMI and ECC worth $3,800?”, you should know what each of them is.

IPMI

- What: Remote access to the computer, independent of the host operating system (Windows/Linux/BSD).

- Why: If Windows/Linux/etc were to totally freeze up, you can restart the machine remotely, without having to walk over to the machine.

This same functionality can be replicated for somewhere around $100, but in most cases it can just be forgone entirely.

ECC RAM

- What: Your RAM holds all the information about the processes that are currently running on your computer. ECC makes sure that this data doesn’t get corrupted by particles flying through space. If one of these particles hits the RAM in just the right way, it can cause a bit-flip: a 1 becoming a 0 or vice versa.

- Why: In very critical or large workloads, such as financial data processing, you need to be absolutely sure that the data your computer is using is what it should be, and that no numbers changed. In non-critical workloads (such as the ones we are targeting) a bit-flip can range from changing nothing to, at worst, causing the system to crash. After this crash you restart the machine and are good to go as if nothing happened.
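If you want to see the idea behind error correction in action, here is a toy sketch using a Hamming(7,4) code: 4 data bits get 3 extra parity bits, and any single flipped bit can be located and flipped back. This is only an illustration of the principle. Real ECC memory works on much wider words and I am not claiming this is the exact scheme any particular DIMM uses. All function names here are made up for the example.

```python
def encode(data):
    """Encode 4 data bits into a 7-bit Hamming(7,4) codeword.

    Positions are 1-indexed: parity bits sit at 1, 2 and 4,
    data bits sit at 3, 5, 6 and 7.
    """
    d1, d2, d3, d4 = data
    p1 = d1 ^ d2 ^ d4  # covers positions 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # covers positions 3, 6, 7
    p4 = d2 ^ d3 ^ d4  # covers positions 5, 6, 7
    return [p1, p2, d1, p4, d2, d3, d4]


def correct(word):
    """Return (corrected word, position of the flipped bit or 0 if none).

    XOR-ing the 1-indexed positions of all set bits gives 0 for a valid
    codeword, or the position of the bad bit after a single flip.
    """
    syndrome = 0
    for position, bit in enumerate(word, start=1):
        if bit:
            syndrome ^= position
    fixed = list(word)
    if syndrome:
        fixed[syndrome - 1] ^= 1
    return fixed, syndrome


def extract(word):
    """Pull the 4 data bits back out of a 7-bit codeword."""
    return [word[2], word[4], word[5], word[6]]


original = [1, 0, 1, 1]
stored = encode(original)

# Simulate a stray particle flipping the bit at position 5.
hit = list(stored)
hit[4] ^= 1

repaired, where = correct(hit)
print("without ECC:", extract(hit))       # data is silently wrong
print("with ECC:   ", extract(repaired))  # data matches the original
print("flipped bit was at position", where)
```

Without the parity bits, the machine would have no way to know that anything changed, which is the whole point of paying for ECC.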

Some people, such as Linus Torvalds (creator of Linux, git, etc.), suggest that every computer should have ECC RAM, just to increase the computer’s stability. This is probably a reasonable take.

So we can see that IPMI is pretty useless (for our workload) and ECC is nice but not required. If we were to bump up our budget a little from $500 we could buy some hardware that is a generation or two older, but would have ECC RAM.

I would hope that any competent IT person already knows all of this information. So why do they keep spending money on:

A. Expensive on-prem servers

B. Not cost-efficient cloud servers

To have someone else to blame. If your internal service goes down, your boss, or someone in a similarly high-up position, will loudly complain about how there is downtime, how they can’t do anything right now, and how this is all because you just wanted to pinch pennies (even though the cost of 30 minutes of downtime is significantly less than the cost difference between the other options). So instead of weathering the storm of blame when something goes wrong once a year, all of IT has decided to use fancy hardware. That way, if something goes wrong, they have successfully covered their ass. If our product is in the cloud, and the cloud goes down, then that is an Azure/AWS/DigitalOcean problem, and IT can shift the blame to them. If we run the product locally and it goes down, it’s now IT’s fault.

So it looks like we spend $1000s/year just to not get blamed if something were to happen. What a wonderful use of money.
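Here is a rough sketch of the break-even math, using the server prices from earlier and the "30 minutes, once a year" outage from above. Two loud assumptions: that the expensive hardware would have prevented the outage entirely (generous), and the number of years the hardware stays in service, which is a hypothetical input I added. `break_even_hourly_cost` is a made-up name for the example.

```python
def break_even_hourly_cost(premium, downtime_hours_per_year, years_in_service=1):
    """How much an hour of downtime must cost before the premium pays off.

    Assumes the pricier option avoids ALL of the downtime, which is
    generous to the pricier option.
    """
    hours_avoided = downtime_hours_per_year * years_in_service
    return premium / hours_avoided


premium = 4310.80 - 500.00  # enterprise server minus the $500 PC
outage_hours_per_year = 0.5  # 30 minutes, once a year

# years_in_service values are hypothetical, not from any real deployment.
for years in (1, 5):
    cost = break_even_hourly_cost(premium, outage_hours_per_year, years)
    print(f"{years} year(s): downtime must cost ${cost:,.2f}/hour to break even")
```

If your downtime costs less per hour than the number printed, the cheaper box wins on money alone, even under these generous assumptions.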

Additional Notes

- The costs shown here don’t even include the cost of running bullsh*t software. IT admins could be running Incus or Proxmox, but those are untested solutions, which is very scary! If something were to happen, they could get blamed for using untested, scary solutions and be chastised that they should’ve gone with the “industry standard”.

- “Industry Standard” software just means software from the company that gives the best vibes and makes you feel warm and fuzzy inside when you ask them what they guarantee. For example, AWS “guaranteed” some absurdly high uptime, but then they went down multiple times in 2025. So it was all a lie. But at least the marketing made us feel good about ourselves!
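For a sense of what an uptime percentage actually promises, here is a small sketch that converts it into downtime per year. It uses the two figures from the top of this post (99.999% and 89.81%) and ignores leap years. The function name is made up for the example.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # ignoring leap years


def downtime_minutes_per_year(uptime_percent):
    """Minutes of downtime per year implied by an uptime percentage."""
    return (1 - uptime_percent / 100) * MINUTES_PER_YEAR


for uptime in (99.999, 89.81):
    minutes = downtime_minutes_per_year(uptime)
    days = minutes / (24 * 60)
    print(f"{uptime}% uptime -> {minutes:,.1f} min/year ({days:.2f} days)")
```

Five nines works out to roughly 5 minutes of downtime a year. 89.81% works out to about 37 days.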